In mid-2021, Tesla removed RADAR from its Model Y and Model 3 fleet to transition to its “Tesla Vision” self-driving approach. My 2021 Model Y Long Range, delivered on the last day of 2020, was among the last that still have this sensor, which supposedly plays a part in Tesla’s hyped-up “driving automation.”

My take is Tesla Vision might have been Elon Musk’s excuse for Tesla to keep shipping its cars even during a chip shortage—each sensor requires a computer chip—or to cut costs. I wouldn’t be surprised if the company changed its mind and added RADAR back or even more types of sensors to its future cars.

The thing is, even with RADAR, existing Tesla cars will never get to the level of actual autonomous driving that Elon Musk promised with the expensive “Full Self-Driving” add-on. It’s just not possible.

Unlike the feature’s intentionally vague name, driving automation is a science with well-defined levels. Let’s find out what they are, and I’ll add my own experience of what Tesla has been doing with its cars.

Driving Automation Explained: The concept

The whole concept of driving automation begins with the definitions of “driving” and “automation.”

Since how a machine “drives” is very different from how a human does it, engineers need to subdivide the task into multiple parts, including moving straight, moving sideways, braking, etc.

Before a vehicle can handle more than one of those tasks simultaneously, it also needs to understand the road correctly, which makes things even more complicated and challenging in terms of engineering and technology.

And that brings us to a few unavoidable technical terms and their acronyms, including Automated Driving System (ADS), Dynamic Driving Task (DDT), Object and Event Detection and Response (OEDR), and Operational Design Domain (ODD).

The technical acronyms of autonomous driving

Since most of us don’t want to spend time reading boring stuff, I have tried to translate these terms, per my understanding, into something easier to digest.

The cabinet below will give you the highlights. The information will be technical but not to the point of giving you a headache. Or you can skip it.

Standard technical terms for driving automation

Below are a few standard technical terms used to determine how a vehicle handles driving automation.

Automated Driving System (ADS)

ADS is the general term to call a vehicle with self-driving capability at any level.

Dynamic Driving Task (DDT)

DDT is what we call driving or the act of controlling a vehicle’s motions. It’s everything involved in getting the car to move from point A to point B.

Technically, DDT includes all the operational and tactical functions required to operate a vehicle in on-road traffic, such as acceleration, deceleration, braking, steering, turning, and stopping.

A well-trained human driver can handle all of those, but a machine might only handle part of the DDT. So, going a bit deeper, when it comes to autonomous driving, we have these sub-terms as part of the dynamic driving task:

- Lateral Motion Control: The control of sideways motion: steering, turning, veering, etc.

- Longitudinal Motion Control: The control of motion along the direction of travel: acceleration, deceleration, braking, etc.

Object and Event Detection and Response (OEDR)

OEDR is the base for self-driving — the awareness of the environment and appropriate real-time reactions.

(For a human, that’s what we often refer to as hand-eye coordination. We need our feet, too, but you get the idea.)

Specifically, OEDR includes monitoring the driving environment—detecting/recognizing/classifying objects and events—and executing appropriate and timely responses.

Operational Design Domain (ODD)

ODD is the pre-defined driving environment.

Specifically, ODD means the specific conditions under which a specific automated vehicle (ADS) is designed to function. Outside of those conditions, its automation might fail.

Think of a person who is very good at driving in an urban area but can’t drive off-road. In this case, the former is their ODD.

The more broadly defined ODD an ADS can handle, the more advanced it is. For the most part, ODD determines an ADS’ limitations and when (human) intervention (DDT fallback) is required.

A perfect ADS has no ODD limitation.

DDT fallback

The extra intervention an automated driving system (ADS) might require. Generally, this is where a human driver needs to take over.

A perfect ADS handles DDT fallback by itself (or with no fallback)—it requires no outside intervention.
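To make ODD and DDT fallback a bit more concrete, here is a minimal Python sketch. Everything in it (the `Conditions` and `OperationalDesignDomain` classes, the field names, and the example values) is made up for illustration and is not how any real car represents its ODD.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Conditions:
    """The situation the car is in right now (hypothetical fields)."""
    road_type: str    # e.g. "freeway", "urban", "offroad"
    weather: str      # e.g. "clear", "rain", "snow"
    speed_kmh: float


@dataclass(frozen=True)
class OperationalDesignDomain:
    """The conditions an ADS was designed for (hypothetical fields)."""
    road_types: frozenset
    weather: frozenset
    max_speed_kmh: float

    def contains(self, c: Conditions) -> bool:
        # Inside the ODD only if every condition is one the ADS was built for.
        return (
            c.road_type in self.road_types
            and c.weather in self.weather
            and c.speed_kmh <= self.max_speed_kmh
        )


def who_drives(odd: OperationalDesignDomain, c: Conditions,
               ads_handles_fallback: bool) -> str:
    """Return who performs the driving task under the given conditions."""
    if odd.contains(c):
        return "ads"
    # Outside the ODD, somebody has to perform the DDT fallback.
    return "ads_fallback" if ads_handles_fallback else "human"


# An imaginary freeway-only system that works in clear weather or rain.
freeway_odd = OperationalDesignDomain(
    road_types=frozenset({"freeway"}),
    weather=frozenset({"clear", "rain"}),
    max_speed_kmh=110.0,
)
```

With this imaginary ODD, a snowy freeway falls outside the domain, so the result depends on whether the ADS can handle the fallback itself or hands the job back to the human.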

In any case, what we need to remember is this: automated driving is complicated.

On top of that, like any skill, it’s available at different levels.

Driving Automation Explained: The 5 autonomous levels

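According to the Society of Automotive Engineers (SAE), driving automation comes in levels (LoDAs). The SAE standard counts six of them, from level 0 (no automation) to level 5, so there are five levels of actual automation, ranging from LoDA 1 to LoDA 5.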

The cabinet below shows how the engineers define these levels—it is only applicable if you have already read the technical terms above.

LoDAs in brief

| Level of Driving Automation | What it can do |
|---|---|
| LoDA 1 (Driver assistance) | Automation performs either longitudinal or lateral sustained motion control (dynamic driving task, or DDT, at the lowest level) with zero object and event detection and response (OEDR). |
| LoDA 2 (Partial driving automation) | Automation performs longitudinal and lateral sustained motion control (DDT at a higher level) with little (or zero) OEDR. |
| LoDA 3 (Conditional driving automation) | Automation performs the complete dynamic driving task (DDT), but not DDT fallback, within a limited operational design domain (ODD). |
| LoDA 4 (High driving automation) | Automation performs the complete DDT and DDT fallback within a limited ODD. |
| LoDA 5 (Full driving automation) | Automation performs the complete DDT and DDT fallback without ODD limitation. |
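If you prefer code to tables, the rows above can be read as a simple decision ladder. The Python sketch below is my own simplification of the table, not the official SAE wording, and the `Capabilities` class and its field names are invented for this example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Capabilities:
    """What the automation can do (hypothetical, simplified flags)."""
    lateral: bool        # sustained steering control
    longitudinal: bool   # sustained speed control
    complete_ddt: bool   # the whole driving task, including OEDR
    ddt_fallback: bool   # copes on its own when things go wrong
    unlimited_odd: bool  # works in all conditions


def loda(c: Capabilities) -> int:
    """Map a set of capabilities to a level of driving automation (0 to 5)."""
    if c.complete_ddt:
        if not c.ddt_fallback:
            return 3  # drives itself, but a human is the fallback
        return 5 if c.unlimited_odd else 4
    if c.lateral and c.longitudinal:
        return 2      # steering and speed together
    if c.lateral or c.longitudinal:
        return 1      # steering or speed, not both
    return 0          # the human does all the driving


# Adaptive cruise control alone: speed control only.
cruise_control = Capabilities(False, True, False, False, False)
print(loda(cruise_control))
```

The ordering of the checks matters: the complete-DDT test comes first because the higher levels include everything the lower ones do.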

To simplify these levels, though, we’ll start with the pre-automation level: level 0 (LoDA 0).

Driving automation level 0: No automation (at all)

Level 0 is the case of vehicles that require total manual control.

These vehicles might not be completely manual—there are driving-related systems, such as emergency braking or an auto signal light, to help the driver.

But the gist of this level is that the human behind the steering wheel does the driving, and any available assistance system does not involve the driving itself.

In short, with LoDA 0, a human drives the vehicle entirely at all times.

Driving automation level 1 or Driver Assistance

This level is where the driving automation begins.

This level is available in many cars that have cruise control, where the vehicle can keep itself at a fixed speed, or better yet, adaptive cruise control, where it can keep a safe distance from a moving vehicle in front of it.

At this level, the human driver has to handle other aspects of the driving, including braking and steering. Again, level 1 has zero environmental awareness or object and event detection and response (OEDR).

In short, with driving automation level 1, a human driver is required at all times.

Driving automation level 2 or Partial Driving Automation

Level 2 includes the automation of level 1. On top of that, the vehicle can control both the steering and the speed of the car at the same time.

This level is often called “Super Cruise Control” or advanced driver assistance system (ADAS). So, level 2 can take care of a big part of the driving job and has a primitive object and event detection and response.

In short, with driving automation level 2, a human driver is required most of the time.

Driving automation level 3 or Conditional Driving Automation

Level 3 is conditional self-driving. The car can drive itself, for the most part, in ideal or near-ideal conditions. Outside of those conditions, the driver has to take over.

That said, level 3 can self-drive and has a high level of environmental awareness, but it can only work in limited environment settings.

In short, with driving automation level 3, a human driver is required at critical times.

Driving automation level 4 or High Driving Automation

Level 4 is similar to level 3 with one significant improvement: The ADS can now take care of situations when things don’t go as planned.

As a result, with level 4, a human driver is generally not needed, but the human manual override/driving option is readily available and might come in handy.

This level is where actual autonomous driving begins.

Driving automation level 5 or Full Driving Automation

This level is the holy grail of driving automation.

The vehicle drives itself entirely in all conditions. It completely takes over the driving job, has perfect environmental-awareness-based responses, and might not even have traditional controllers like the steering wheel and brake/accelerating pedals.

In short, with level 5, the human is the passenger.

To sum up, driving automation has a lot of degrees and nuances, even within each level itself.

But it includes two inseparable essential parts: The act of driving—or the dynamic driving task (DDT)—and the handling of the ever-changing real-time environment—or object and event detection and response (OEDR).

Generally, the former is impressive and relatively easy to program—it’s what we humans can experience. The latter is where things get very complicated—it happens behind the scenes.

That’s because the way a machine “sees” the environment is different from how we humans do it. It can be better at certain things and worse at others.

With that, let’s see how a self-driving car manages the road.

Driving Automation Explained: The hardware

To self-drive, an autonomous vehicle uses object-detecting sensors to read the environment around it. Currently, there are four types: Ultrasonic, RADAR, LiDAR, and cameras.

Ultrasonic sensor (or Sonar)

Ultrasonic sensors are by far the most popular sensors in cars. They are the tiny round dots you see around the body and the reason the car beeps when you get close to an object, like another car or a wall.

An ultrasonic sensor emits high-pitched sound waves (higher than a human can hear) and waits for them to bounce back after hitting an object. It then measures the latency using the speed of sound to find out the distance between itself (therefore, the car) and the thing.
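The math behind that echo is simple enough to show. Here is a small Python sketch, assuming sound travels at about 343 m/s (dry air at roughly 20 °C); the function name is mine, not something from an actual parking-sensor firmware.

```python
SPEED_OF_SOUND_M_S = 343.0  # assumed: dry air at about 20 °C


def echo_distance_m(round_trip_s: float,
                    speed_m_s: float = SPEED_OF_SOUND_M_S) -> float:
    """Distance to an object from the time a sound pulse takes to come back."""
    if round_trip_s < 0:
        raise ValueError("round-trip time cannot be negative")
    # The pulse travels to the object and back, so halve the total path.
    return speed_m_s * round_trip_s / 2.0


# An echo that returns after 10 milliseconds puts the object under 2 meters away.
print(echo_distance_m(0.010))
```

The division by two is the part people tend to forget: the measured time covers the trip out and the trip back.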

Ultrasonic sensors are cheap and reliable but have one major drawback: the short range.

Underwater, sonar can detect objects from tens (if not hundreds) of miles away because sound travels effectively in water—the environment is thick and bouncy with tightly packed water molecules.

The air particles are much farther apart—so we can breathe!—and that limits the range of ultrasonic soundwaves.

As a result, this type of detector can “see” objects no farther than 10 feet (3 meters) away. It’s only good for detecting close objects, suitable for parking or blind spots, and not a reliable sensor for driving automation.

RADAR

RADAR is short for radio detection and ranging system. It works similarly to sonar but uses radio waves instead of sound—it’s just like Wi-Fi.

As such, the signal can go much farther than Ultrasonic, though still not far enough, and it is not affected by the weather (snow, rain, dust, etc.).

A front-mounted RADAR sensor on a car can detect objects up to some 200 feet (60 meters) ahead, enough to slow down or apply the brakes in time.
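How much is “enough” depends on how fast you’re going. The rough Python sketch below uses the textbook stopping-distance formula (reaction distance plus braking distance). The half-second reaction time and the 7 m/s² deceleration are my own assumptions for a dry road, not Tesla’s numbers.

```python
import math

FEET_TO_M = 0.3048
M_S_TO_MPH = 2.236936


def stopping_distance_m(speed_m_s: float, reaction_s: float = 0.5,
                        decel_m_s2: float = 7.0) -> float:
    """Distance covered while reacting plus distance covered while braking."""
    return speed_m_s * reaction_s + speed_m_s ** 2 / (2.0 * decel_m_s2)


def max_speed_for_range_m_s(range_m: float, reaction_s: float = 0.5,
                            decel_m_s2: float = 7.0) -> float:
    """Highest speed at which the car can still stop within the given range.

    Solves v * t + v**2 / (2 * a) = d for v (the positive root).
    """
    at = decel_m_s2 * reaction_s
    return -at + math.sqrt(at * at + 2.0 * decel_m_s2 * range_m)


radar_range_m = 200 * FEET_TO_M  # the 200-foot figure mentioned above
v_max = max_speed_for_range_m_s(radar_range_m)
print(f"{v_max * M_S_TO_MPH:.0f} mph")
```

Change the assumed reaction time or deceleration (think wet roads) and the safe speed drops quickly, which is why detection range matters so much.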

Still, RADAR is far from perfect for autonomous driving—it has a long list of shortcomings, but the main ones are:

- It takes longer (than Ultrasonic) to lock on an object.

- It can’t differentiate objects accurately, nor can it handle multiple objects well. It has very low resolution, so to speak.

- It can’t see colors or detect the composition of an object.

As a result, RADAR was never intended to be a complete detection system for self-driving, but rather, an additional detector.

LiDAR

LiDAR is short for light detection and ranging system. (Or you can think of it as Light + RADAR.)

LiDAR shares the same concept as RADAR, but now it uses light (laser) instead of radio waves.

A LiDAR sensor, generally placed on a vehicle’s roof, emits millions of pulses of light (out of the spectrum humans can see) in a pattern and builds a high-resolution 3D model of the surroundings.

As a result, a LiDAR system can detect small objects and differentiate them with extreme accuracy. It can tell a bicyclist from a motorcyclist or even a person riding a skateboard with a helmet (or without one).

But LiDAR itself also has shortcomings:

- It can’t handle weather as well as RADAR.

- The sensor, for now, is ostentatiously big and very expensive.

- It can’t see colors.

So, one thing is for sure: LiDAR alone is not enough for a self-driving car, though it can replace RADAR.

Cameras

Cameras are by far the most important sensors in autonomous driving because they are the only ones on this list that can actually see, just like our eyes.

For a car to drive by itself, it needs to read signs, understand traffic lights, and so on. A camera can also see far, and when multiple units are in use, they can also build a 3D model of the environment—again, just like our eyes.
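For the curious, here is the classic way two side-by-side cameras estimate distance, sketched in Python. It assumes an idealized stereo pair (two identical, perfectly aligned pinhole cameras), which is a textbook simplification and not a description of how any particular car does it. The parameter names and example values are mine.

```python
def stereo_depth_m(focal_px: float, baseline_m: float,
                   disparity_px: float) -> float:
    """Depth of a point seen by two aligned cameras.

    focal_px:     focal length expressed in pixels
    baseline_m:   distance between the two cameras, in meters
    disparity_px: how far the point shifts between the two images, in pixels
    """
    if disparity_px <= 0:
        raise ValueError("disparity must be positive (point at infinity otherwise)")
    # Similar triangles: the nearer the object, the larger the shift.
    return focal_px * baseline_m / disparity_px


# Made-up numbers: 1000 px focal length, cameras 0.5 m apart, 10 px shift.
print(stereo_depth_m(1000.0, 0.5, 10.0))
```

Our eyes work on the same principle: hold a finger close to your face, alternate eyes, and it jumps more than a distant object does.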

Cameras have been available in cars for a long time, initially to help with parking and driving in reverse. In this case, it’s still the human driver who interprets what a camera can see. And that’s simple.

For a car to drive itself, it must be able to understand the object by itself. And that’s a whole different ball game. The car now needs a brain, too—again, just like what we have behind our eyes.

Unlike a human who can generally identify objects instantly, a car (or its computer, that is) wouldn’t know if red means stop and green means go or if a big hole in the road is something to avoid. And that’s the simple stuff.

Making a machine able to see things and interpret them accurately (per human standards) is extremely hard.

For example, knowing that you must yield to a (fallen) bicycle but can run over a life-size painting of one on the road—often the sign for a bike lane—requires a lot of programming.

So a self-driving car needs to be trained via a network with a vast amount of accumulated data to interpret the environment and react correctly the way we want it to, in real-time. This type of artificial intelligence (AI) is no easy task—real life is fluid and full of unpredictability.

Select all cat images below!

You might have encountered CAPTCHA challenges, where you have to prove that you’re a human and not a bot on a website.

In this case, you’re often asked to pick different pictures of the same object. By doing so, you might help train some system somewhere on object detection.

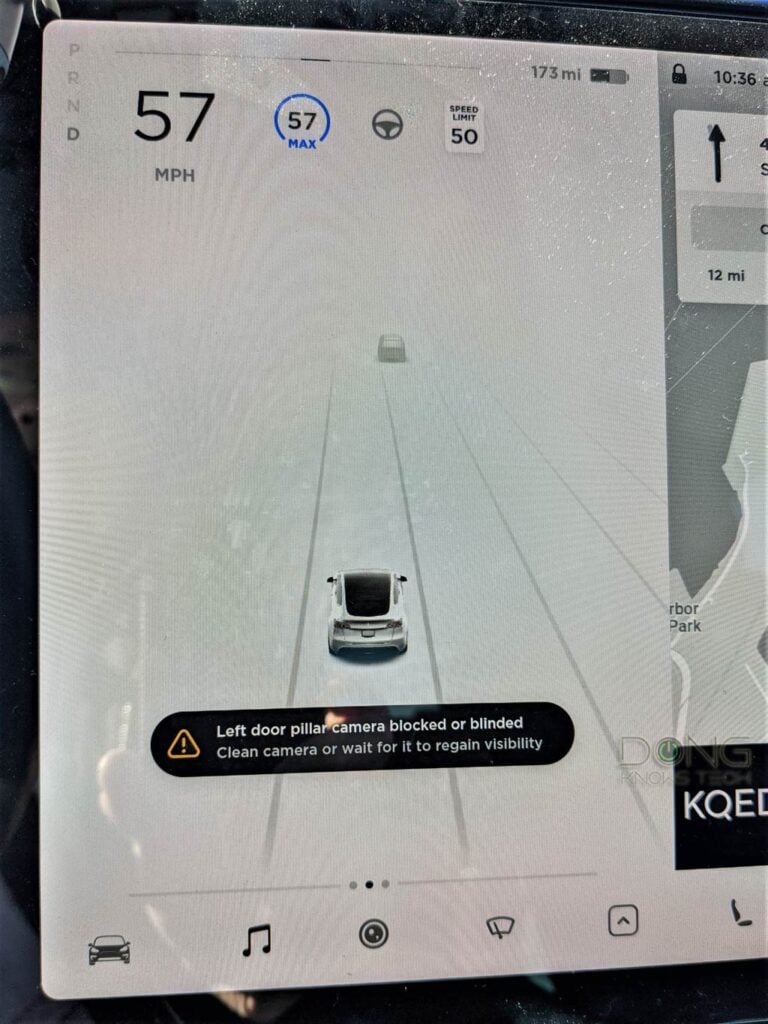

Cameras also have this major drawback: they need a line of sight and can, therefore, be easily blocked or obstructed.

So, cameras are also not reliable at all times. There’s no way out of that.
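Putting the four sensor types side by side makes the gaps obvious. The Python sketch below boils the sections above down to a few strengths per sensor. The capability labels and the list of what a self-driving car needs are my own shorthand, so treat it as a summary, not a spec.

```python
# Strengths of each sensor type, as described in the sections above.
CAPABILITIES = {
    "ultrasonic": {"close_range"},
    "radar": {"long_range", "bad_weather"},
    "lidar": {"long_range", "high_resolution"},
    "camera": {"long_range", "high_resolution", "color"},
}

# What a self-driving car arguably needs (an assumption for this example).
REQUIRED = {"close_range", "long_range", "bad_weather", "high_resolution", "color"}


def missing(sensors) -> set:
    """Return the requirements that the given sensor suite does not cover."""
    covered = set().union(*(CAPABILITIES[s] for s in sensors))
    return REQUIRED - covered


for name in CAPABILITIES:
    print(name, "is missing", sorted(missing([name])))
print("all four are missing", sorted(missing(CAPABILITIES)))
```

Every single sensor leaves something uncovered, and only the full set covers the whole list.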

The point is that there’s no one-sensor-fits-all solution for self-driving. All autonomous cars need as many (types) of sensors as possible, working in tandem, to drive themselves accurately.

Or do they? Tesla’s Elon Musk has suggested otherwise. And that brings us to Tesla and its never-ending game of Full Self-Driving chicken.

Driving Automation Explained: Why you can’t count on existing Tesla cars

For years, Tesla has been known for its hyped-up self-driving capability via the controversially (and rather dangerously) named features, including “Autopilot” and “Full Self-Driving.”

In my experience, the former is an intelligent Cruise Control feature, and the latter adds a few gimmicks on top of that. They are much better than most existing gas cars’ Adaptive Cruise Control, but neither lives up to its name.

And here’s a fact: in all Teslas, the driver must be present and prepared to take over at any time. So, technically, none is qualified as real driving automation.

And that’s because autonomous driving is challenging, and (existing) Tesla vehicles don’t (yet?) have enough hardware, data, or intelligence for the job. The world needs more time on this front.

Just because Tesla is ahead of the game—if it’s actually so—doesn’t mean it’s already at the finish line. And it’s not even close.

What Tesla uses for its imperfect self-driving feature

Tesla is the only big car company that doesn’t use LiDAR, so far. In 2019, Elon Musk famously said, “LiDAR is a fool’s errand.”

But Elon has said many other things. For example, according to him, the Full Self-Driving feature that Tesla charged an arm and a leg for would be finalized in 2018. Right now, in late 2021, it’s still in beta at best.

And it gets worse. To get into the new beta version, you have to be qualified as “a good driver” via Tesla’s Safety Score System, despite the fact you already paid for the feature itself. But that’s fair enough.

The real issue is that the latest beta is terrible. It has tons of problems with the “phantom braking”—that’s when the car abruptly slows down significantly for seemingly no reason—occurring more frequently. It feels like an “Alpha” version.

The point is, take what Elon says with a grain of salt!

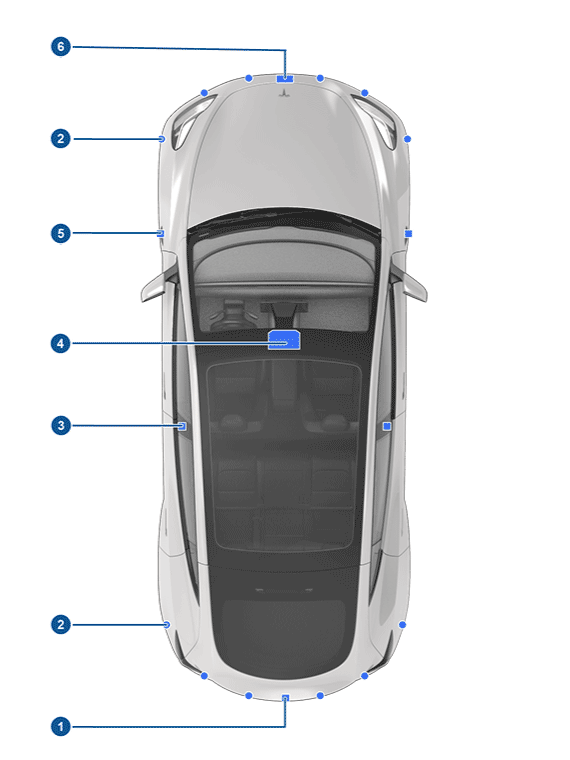

Before May 2021, all Tesla cars, at least those of the model year 2018 and later, came with a RADAR sensor mounted on the front, 8 cameras, and 12 Ultrasonic sensors around the body.

With that number of sensors, the Autopilot feature is generally impressive on a freeway—matching level 2 of driving automation (LoDA 2) mentioned above. And if you upgrade/subscribe to Full Self-Driving, you’ll get a bit of level 3—to some extent, the car now handles lane changing and even turning on streets.

Apart from my Model Y, I’ve tried Autopilot and FSD on a few Model S, X, and 3, too. They were all similar, if not the same, as I described in the piece about FSD. Tesla’s self-driving feature sure is useful, mostly on freeways, but you can’t count on it 100%.

That’s because, in its current state, a Tesla’s self-driving is still quite bad at avoiding small objects on the road, such as potholes or even a piece of a broken tire.

Even worse, it generally doesn’t accurately recognize stationary objects, even large ones, including parked cars.

My take is that it’s a matter of resource management. It’s in the software.

If Tesla makes the car aware of all non-moving objects, that would be too much information for the car to process in real-time. There are just too many trees, houses, overpasses, large traffic signs, and so on and so forth.

That’s not to mention the stuff we humans can’t see. And maybe that’s the reason behind the “phantom braking” issue—the car sees something it perceives as a danger that we don’t. Again, we’re talking about making a machine behave like a human here—AI, that is.

As a result, when driving a Tesla, you’ll note that the car’s sensors pick up traffic-related items, like cones, lights, signs, and even trash cans, but often do not “see” parked cars—they don’t appear on its screen.

Indeed, with Autopilot engaged, my Model Y always insists on being in the middle of the lane and often gets too close to a parked lane-straddling vehicle. In fact, it likely would have even crashed a few times if I hadn’t intervened.

Tesla crashes

And that’s why you might have run into stories about Tesla crashing into a parked truck or even a police cruiser. The most famous is probably the Model 3 that crashed into an overturned truck on a Taiwanese freeway.

In this case, chances are the car’s sensors saw the truck as a stationary object but interpreted it as an overpass or something that it had not been trained to perceive as a danger or didn’t come to that conclusion fast enough.

(Keep in mind that all Teslas have Emergency Braking that’s engaged by default even when Autopilot is not in use.)

And it hit close to home, too. A couple of days ago, a friend of mine crashed their Model S into a disabled truck in the middle of a freeway in the San Francisco Bay Area.

(Details of the incident are still unclear, but everyone walked away.)

And that brings us to a new thing that Tesla just did with its latest Model Y and Model 3: the removal of RADAR.

Tesla sans RADAR: What’s the deal?

Over Halloween, I took my kids trick-or-treating with a neighbor who had bought a 7-seater Model Y a month earlier. Naturally, we chatted about our cars, and I was surprised at how displeased he was with his Autopilot.

“It’s just terrible,” he said, complaining that the feature frequently refused to engage or disengaged for no reason, or that the car would slow down “out of nowhere,” by which he probably meant the phantom braking mentioned above. And that was when the removal of RADAR hit me.

Indeed, starting in May 2021, Tesla began its “Tesla Vision” approach to self-driving by relying solely on cameras and AI. In other words, it uses a computer system to interpret the feeds from the car’s 8 cameras as its only object-detecting solution.

As a result, all Model Y and Model 3 cars shipped in May and later no longer have the front RADAR sensor.

On this change, Elon Musk said Tesla Vision would deliver “mind-blowing” results and bring Tesla’s self-driving to the highest full autonomous level (that’s level 5) by the end of 2021—that’s a month and a half from now.

Well, that’s not going to happen. It’s just impossible.

Again, Elon has said many things.

Curious about the change, I briefly test-drove a new Model Y sans RADAR before writing this post, and its Autopilot was indeed sub-par compared to mine.

It wasn’t terrible—it’s still far better than almost any ICE car’s Cruise Control—and first-time buyers will still be impressed—but it was definitely worse than those with RADAR.

The new car often felt unsure of itself, and I often couldn’t, on the first try, engage Autopilot when going at a certain speed or on a particular patch of road where I could on my RADAR-enabled Model Y.

There were also other subtle and not-so-subtle things—you’ll notice if you have driven a Tesla with RADAR before. So, the Tesla Vision right now is a significant downgrade—it indeed has less hardware.

Tesla says the new approach will take some time for the system to learn. Data is being collected and analyzed each time a similar Tesla Vision car makes a “mistake.” And that might be true—things will get better.

But even when or if Tesla Vision works 100%, keep in mind that, again, physically, cameras have many issues:

- They can’t handle the weather, such as rain, snow, or dust.

- Mud, dead insects, small pieces of trash, or even bird droppings can cover them at any given time. (This has all happened to my Model Y.) By the way, none of the cameras have a wiper.

- Cameras don’t work well, if at all, when facing a bright source of light, such as the sun or the high beams of oncoming traffic.

So the point is, you can’t depend on Tesla Vision at all times. At best, it only works on beautiful days, and you can’t count the best-case scenario as success.

In other words, if what Elon said about Tesla Vision were true, it’d be accurate only in certain situations—more than enough for some demos or YouTube fan videos. The car would need RADAR, LiDAR, or a new type of sensor for the rest.

Again, self-driving should be about leveraging multiple types of sensors to consistently deliver the best safety results, not betting on a single type combined with some software voodoo.

Otherwise, the human driver will still be required as a backup, and I don’t want to have to keep my fingers crossed while driving.

The takeaway

Driving automation is a matter of degree.

You can count on any well-balanced, low-tech vehicle to “drive itself” for a few miles on a straight, empty road. But if you want a car to take you home reliably right now, even when you’re sleeping, get a taxi! That’s the automation level we don’t have yet. It’s just really hard.

That’s especially true for all existing Tesla cars, despite the fact that the company is ahead of the game—or has managed to appear that way. So far, its Full Self-Driving feature (or even Autopilot) has been hype at best and a lie at worst, considering the names.

In the next couple of years, you might be able to have a Tesla drive by itself most of the time under your supervision—that’s driving automation level 3 (out of 5). Anything more than that is simply impossible in all existing Teslas.

Don’t get me wrong. I’m a Tesla fan, and I enjoy driving my Model Y. But I look at its Autopilot (or FSD) as what it is: A helpful driver-assistance feature. Nothing more.

In short, Tesla’s self-driving feature on existing cars is an excellent tool if you don’t count on it as driving automation—it likely never will be.

On the other hand, if you treat it as a driverless feature, you’ll get yourself into trouble, possibly even worse, and you only have yourself to blame.

A machine doesn’t feel pain. Humans do. Don’t fool yourself till it hurts.

more info on why you probably should check your batteries before cruisin’ along {…}

Please respect the comment rules, Richard. Maybe you should read and do your homework first before sharing anything more here.

Thanx Dong, good insight into so-called self-driving automation. Musk is a scandalous showman and is doing a great disservice to the concept of assisted driving by hyping up expectations way beyond what can safely be achieved. Having said that, I nearly went on to do a PhD in motorway traffic control many years ago. Indeed, the concepts I looked at have only been implemented in the last decade. It’s all to do with regulating speed on a highway vs traffic density. It’s well known that a highway has a maximum speed/density capacity. If it’s exceeded, you get the phenomenon of shock flow: stop-start traffic jams for no apparent reason.

I have always felt that the so-called outer fast lane of a 3-lane UK motorway was badly used, leading to reckless driving behaviours. I would advocate using these lanes as a kind of road train system, where vehicles, coaches, and haulage can be linked together by radar distance-sensing separation and the speed of the train is controlled by a central monitoring station. You say 200ft for radar sensors, so this would give, say, a limit of 60mph to allow for stopping distance.

Imagine a stream of dozens of VIP coaches invisibly tethered, traveling between major city centers. These coaches could peel off and travel independently to sub-terminals around the main city. So much cheaper and quicker to install than a fixed high-speed rail, viz. our disastrous HS2 costing £100 billion. All this on repurposed highways. I hope this gives a snapshot of the failed vision (glad I didn’t pursue that career, would have been a lifelong series of disappointments (political in construction lobby interference)).

Sure, Robin. Your idea about those coaches is great, but it won’t work in the US. 🙂

If attempting all cameras, a much more advanced camera setup is currently needed for the most safe level 5. More wipers, more washer fluid nozzles, more cameras to get angles like when emerging from tight side road. And a LCD glass outer layer to selectively mask sun and headlights.

Even then, the need for inspecting tires, taking car park tickets and removing hardened bird droppings may still prevent level 5 so also need a free Teslabot for those who bought “Full” Self Driving.

The bot is a bit extreme but you got a point there, Dar.

Great article. I’m a mechanical engineer. Always wondered how they get away with their misrepresentation of what’s actually possible in reality. Maybe they’ll be peddling 6 monthly “booster” shots in the future to fix bugs/viruses.

Thanks, Rud. Your analogy is a bit off, but I catch your drift. 🙂

Very very unfortunate, misinformed and unscientific comparison, Rud, but anyway… I’ll go back to topic.

Personally I’m not a fan of Tesla’s philosophy, especially concerning repairs and all that, but I’m on Tesla’s boat regarding the camera-only approach.

Here is why:

– Sonar: not useful for self-driving (short range = too late to react; only helpful for parking, which seems like something cameras can handle)

– Radar: very slow response times and low resolution = no added benefit versus cameras

– LiDAR: only helpful when cameras don’t need help: when weather conditions are good. Totally useless (and actually make things worse) when cameras most need help: when weather conditions are bad. Then… why put them at all?

So all in all… adding Sonar, Radar or LiDAR is totally redundant if the camera’s AI is intelligent enough, and Tesla’s SW seems to slowly be getting there.

Not sure how many other driving automation brands are doing with NON-premapped roads, so if anyone has any insight, I’d appreciate it.

Thanks for the input, Heff. I mentioned all of your points in the post. The issue with cameras is that they can be blocked. It’s raining/snowing season now, and I have experienced that multiple times in the past weeks.

To be reliable, at the very least, each camera needs a wiper.

For safety reasons, RADAR and LIDAR will help, but it’s always a matter of how they are used.

Well… the thing is that Radar and LiDAR won’t actually help, and when most needed, they can actually be counterproductive.

Putting wipers in is something that can be done, and seems more like an aerodynamics choice.

If you do lose your cameras to dirt, Radar and LiDAR are basically useless, so autonomous driving will be disabled either way.

If you want to regain autonomous driving, stop the car somewhere safe, clean the cameras, and continue your journey.

Obviously that’s not level 5, but Sonar, Radar and LiDAR would definitely not help achieve level 5 in a situation with no cameras.

You’re right. The camera is a must-have sensor, period. But like the other two, it, by itself can’t get to level 5, not even three. We need a combo of multiple sensors working together via software. You might want to read the entire post. 🙂

“We need a combo of multiple sensors working together via software. You might want to read the entire post.”

And which sensors would that be? I might be missing something, because I already explained why Sonar, Radar and LiDAR won’t be the ones that help achieve Level 5.

I believe that all existing Teslas will get there (as long as Tesla still exists as a company).

The promise with the FSD package is that your car will be upgraded as needed until FSD is a reality. There is no guarantee that actual FSD will happen in the next 3, 5, or 10 or more years, but when it is ready, Tesla will retrofit every Tesla with an FSD package for free. They have already upgraded computers, and they are now replacing cameras in older cars. Now, will you own your car when it is ready? I personally wouldn’t pay a premium until they had a full level 3 system in place at a minimum.

Me neither, especially since FSD is now available via subscription. By the way, upgrading hardware means the car is no longer the “existing car.” And I’m pretty sure you’d want a new car then with better sensors already in place. 🙂